Hidden Forces Podcast 210 - A.I. Future: Utopia or Apocalypse? Ten Visions For Our Future | Kai-Fu Lee

Primer: How will our future unfold with AI? In this episode of the Hidden Forces Podcast, host Demetri Kofinas speaks with Kai-Fu Lee about his latest book, AI 2041: Ten Visions for Our Future. It is a non-fiction book that uses fictional stories as thought experiments to help readers understand the most important technologies of the next 20 years.

Background

CEO of Sinovation Ventures, a leading Chinese tech venture firm

Formerly the president of Google China and a senior executive at Microsoft, SGI, and Apple

New York Times bestselling author of AI Superpowers

Has a new book titled AI 2041: Ten Visions for Our Future

Describing His New Book

💡 Demetri describes Kai-Fu’s new book as a non-fiction book that uses fictional stories as thought experiments to help readers understand the most important technologies of the next 20 years. Is this a fair description?

Agrees with the description

Fictional stories make an intimidating subject like AI more understandable and entertaining to people

Has a co-author, Chen Qiufan, who is a well-known science fiction writer

Choosing The Scenarios For The Book

Had to brainstorm the technologies that he wanted to cover in the book

The technologies needed to be covered in sequence, from easy to hard

Wanted to connect the technologies to different industries like healthcare, education, etc.

Book is not just about AI. It includes quantum computing, blockchain, drug discovery, energy, materials, climate, etc.

Had to leave out gene editing and CRISPR because he couldn’t fit them into the stories

Misconceptions People Have About AI

People think that programmers program AI using if-then-else rules. This was true 30 years ago and is no longer true today

In the last 5-10 years, machine learning, a subfield of AI, has become the dominant approach in AI

Machine learning works by taking a large amount of data and figuring out how to make decisions from the data. It does not require any human to micromanage and set the rules
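As a rough illustration of the contrast described in this section, the sketch below places a hand-written if-then-else rule next to a cutoff that is picked from labeled examples. The data, the function names, and the single-threshold model are all assumptions made for illustration; real machine learning systems use far more data and far richer models.

```python
# Hypothetical toy data: (measurement, label) pairs, label 1 = "positive".
samples = [(1.0, 0), (2.0, 0), (3.0, 0), (6.0, 1), (7.0, 1), (8.0, 1)]


def rule_based(x):
    """Old style: a programmer hard-codes the cutoff in an if-then-else rule."""
    if x > 5.0:
        return 1
    else:
        return 0


def learn_threshold(data):
    """Learned style: pick the cutoff that misclassifies the fewest examples."""
    best_cut, best_errors = None, len(data) + 1
    for cut in sorted(x for x, _ in data):
        # Predict 1 when x >= cut, then count disagreements with the labels.
        errors = sum(1 for x, y in data if (1 if x >= cut else 0) != y)
        if errors < best_errors:
            best_cut, best_errors = cut, errors
    return best_cut


learned_cut = learn_threshold(samples)
print("learned cutoff:", learned_cut)
```

Nobody typed the learned cutoff by hand; it falls out of the examples, which is the point being made about machine learning not needing a human to set the rules.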

The Opening Story

💡 The opening story revolves around a family in Mumbai who signed up for a deep learning-enabled insurance program. The family uses a series of applications intended to improve their lives in ways that reduce their insurance premium.

What he wanted readers to take away from the story is that people are concerned about large companies with a lot of data

In the story, the company is larger than the likes of Google and Facebook

A second point he wanted to highlight is that even when the interests of the AI’s owner are aligned with those of the policy buyer, things can still go wrong

What Are Some Of The Ethical And Technical Challenges Associated With Implementing This Type Of Learning Function?

Often, the engineers are not aware that they are building technologies that brainwash us or cause us to see things and think in certain ways

“Because the engineer is thinking, hey, I work for a large internet company that want to program the content so that users click more. It seems completely reasonable. (...) but what people are missing is that AI is so powerful, that when you tell it to go do one thing, it maniacally focuses on that and will do so to such a degree of optimality and perfection, that it can cause other bad things to happen.”

- Kai-Fu Lee

CEOs, engineers, and product managers have to realize the powerful weapon that they have in their hands and build it carefully. It’s not just about how much money the company makes

Do We Have To Make Decisions About Who We Can Trust With Making These Decisions? How Do These Individuals Make The Right Decisions?

There can be no perfect answer

Even before AI, humans made many biased and unfair decisions. We should not assume that everything would be perfect without AI

There should be regulations in place to prevent bad behaviour

Tools can be used to catch problems (e.g. if an AI researcher does not include enough women in the training data and the model becomes biased against women, the tools should be able to detect that and inform the researcher)

There can be social and market mechanisms that act as watchdogs for companies that misbehave (e.g. a metric of how much fake news appears on each social media platform)
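The training-data check mentioned in this section could, in its simplest form, look something like the sketch below. It only counts how often each group appears and flags groups that fall under a chosen share. The field name `gender`, the 30% cutoff, and the function name are hypothetical; real fairness tooling looks at much more than raw counts.

```python
from collections import Counter


def underrepresented_groups(records, key, min_share=0.3):
    """Return the groups whose share of the records is below min_share."""
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return sorted(group for group, n in counts.items() if n / total < min_share)


# Hypothetical training set: 8 records labeled "male", 2 labeled "female".
records = [{"gender": "male"}] * 8 + [{"gender": "female"}] * 2

flagged = underrepresented_groups(records, "gender")
print("groups to review:", flagged)
```

A warning like this does not fix the bias by itself; it just tells the researcher where to look before training.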

How Vulnerable Are These Systems To Attack?

There are a number of ways that bad people can get in:

Feeding the wrong training data to AI

When AI is being run, attackers can find a weakness and manipulate the inputs to trick it (e.g. putting tape on a stop sign so that autonomous vehicles no longer recognize it as a traffic sign)
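The second kind of attack can be sketched with a toy linear classifier. A small, deliberate nudge to each input feature is enough to flip the prediction, which is loosely what the tape on the stop sign does to a vision model. The weights, the input, and the step size below are made-up numbers, and a real attack on a deep network is considerably more involved.

```python
def predict(weights, bias, x):
    """Toy linear classifier: 1 = "stop sign", 0 = "not a stop sign"."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score > 0 else 0


def perturb(weights, x, eps):
    """Shift each feature by eps in the direction that lowers the score."""
    def sign(w):
        return 1 if w > 0 else -1 if w < 0 else 0
    return [xi - eps * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model and input (all values are illustrative assumptions).
weights = [0.5, -0.25, 1.0]
bias = -0.5
clean_input = [0.6, 0.2, 0.4]

tampered_input = perturb(weights, clean_input, eps=0.1)
print("clean:", predict(weights, bias, clean_input))
print("tampered:", predict(weights, bias, tampered_input))
```

Each feature moved by only 0.1, yet the label changes, because the change was aimed exactly where the model is most sensitive.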

How Autonomous Vehicles Work

Autonomous vehicles take a set of inputs and use them to decide how to control the steering wheel, the brake, the accelerator, etc.

Inputs are obtained from the many cameras and sensors that are in an autonomous vehicle

They also use deep learning to recognize various objects: pedestrians, cars or trucks, stop signs and traffic lights, etc.

In constrained environments, AI can do it better than people (e.g. forklifts inside a warehouse)

AI can do a great job driving trucks on highways, as highways are a relatively constrained environment

AI does better in well-lit conditions

AI does badly in long-tail scenarios where there is not enough data or simulation to train it (e.g. driving at night in heavy snow, or downtown with pedestrians walking about)

Start with constrained environments → go to less constrained environments → reach a complete replacement for humans
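The flow described in this section, from recognized objects to control commands, can be caricatured in a few lines. In a real vehicle the detections would come from deep learning models running on camera and sensor data; here they are supplied by hand, and the labels, distances, thresholds, and command format are all assumptions for illustration.

```python
def decide(detections, speed_kmh, speed_limit_kmh=50.0, safe_distance_m=30.0):
    """Turn a list of recognized objects into a toy control command."""
    hazards = {"pedestrian", "stop_sign", "red_light"}
    for obj in detections:
        # Brake fully if any hazard is closer than the safe distance.
        if obj["label"] in hazards and obj["distance_m"] < safe_distance_m:
            return {"steer": 0.0, "throttle": 0.0, "brake": 1.0}
    if speed_kmh < speed_limit_kmh:
        # Road looks clear and we are under the limit: accelerate gently.
        return {"steer": 0.0, "throttle": 0.3, "brake": 0.0}
    # At or above the limit with no hazard: coast.
    return {"steer": 0.0, "throttle": 0.0, "brake": 0.0}


clear_road = decide([], speed_kmh=40.0)
pedestrian_ahead = decide(
    [{"label": "pedestrian", "distance_m": 12.0}], speed_kmh=40.0
)
print(clear_road)
print(pedestrian_ahead)
```

The hand-written policy only works because the situation is simple, which mirrors the point that constrained environments are the easiest place to start.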

Is The Ability To Work Well In Environments Of Uncertainty A Way To Define Intelligence?

That’s one of the expectations of intelligence

Thinks that someday AI would be able to work in such environments

It is possible to list 30-40 things that we used to think made humans intelligent. Over the past 10 years, AI has been overtaking humans in some of these tasks

Managing Liabilities

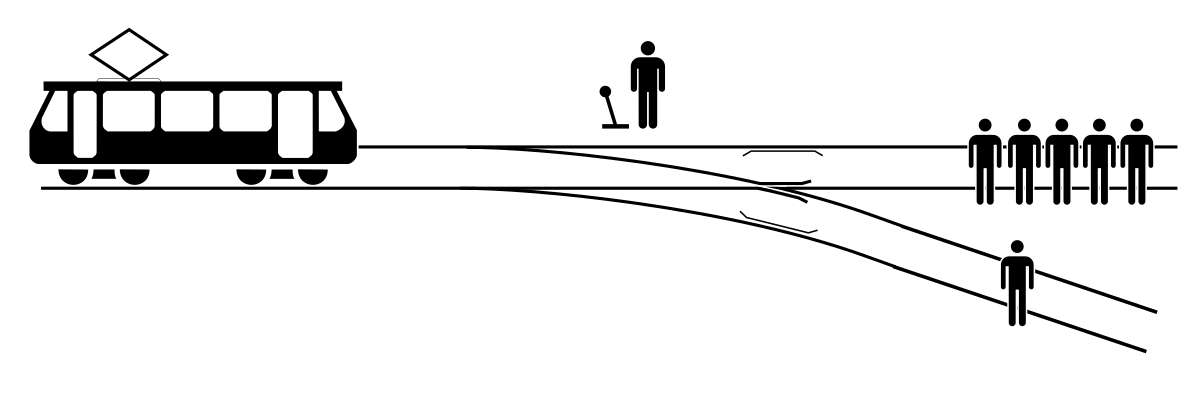

Brought up the Trolley problem, a dilemma in which the trolley will hit two people if it continues straight ahead, but will hit only one person if the driver pulls the lever and switches it to another track

The autonomous vehicle problem is akin to the Trolley problem. AI will be making decisions where lives may ride on that decision

How Will Society And The Government Wrap Their Arms Around Negative Eventualities?

The human race has to accept that AI could deliver a goal that benefits humans overall but unintentionally results in a few casualties

When that happens, programmers would have to go back and gather more data on the failure

More research is needed so that AI can approximate a human description of why something happened, letting humans understand why a particular decision was made

“It reminds me of an episode we did with Jonathan Haidt, where he wrote the book The Righteous Mind. And I think he said in that book, that decisions come first, strategic reasoning second, so we reason after the fact. Which is kind of scary when you think about it.”

- Demetri Kofinas

The Story Called Quantum Genocide

The story covers two important technologies:

Quantum computing

Autonomous weapons

A mad scientist used quantum computing to break the security of Bitcoin wallets and stole every single Bitcoin from around the world

He used the stolen Bitcoin to create autonomous weapons, which are tiny drones that could be held in one’s palm

In the story, he used them to assassinate the world’s elites

Quantum computing holds all possibilities open, instead of the strict “yes” or “no” of classical computing

Quantum computers use qubits, which can take on any value, so that many possibilities are tried simultaneously

It is well suited to modeling things in the real world because of its relationship with quantum mechanics
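One common way to make the qubit description concrete is to write a single qubit as a pair of amplitudes, whose squared magnitudes give the odds of reading 0 or 1. The sketch below uses that textbook picture; the function names are our own, and it simulates the math on an ordinary computer rather than using any quantum hardware.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate, which turns a definite 0 into an equal mix."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))


def probabilities(state):
    """Chance of measuring 0 and of measuring 1 for a single-qubit state."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)


def num_amplitudes(n_qubits):
    """A register of n qubits is described by 2**n amplitudes."""
    return 2 ** n_qubits


zero = (1.0, 0.0)            # a qubit that is definitely 0
mixed = hadamard(zero)       # now "both" 0 and 1 until measured
p0, p1 = probabilities(mixed)
print("P(0), P(1):", p0, p1)
print("amplitudes for 10 qubits:", num_amplitudes(10))
```

The doubling of amplitudes with every added qubit is why even a few thousand qubits are described as powerful.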

How Many Years Will It Take Till Quantum Processing Has An Impact On Other Technologies, Including AI?

In his book, he selected a 4000-qubit quantum computer, as 4000 qubits is approximately what it would take to break Bitcoin wallets

In the last few years, we have gone from a few qubits to tens of qubits. Currently, we are at around 200 qubits

Based on the IBM roadmap, we will reach 4000 qubits in 10-15 years

People who work in the quantum field believe that 20 years is a reasonable time frame in which to build a 4000-qubit system
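The figures in this section allow a quick back-of-the-envelope check. Assuming, purely for illustration, that qubit counts grow by steady doubling (an assumption of ours, not something stated in the episode), the sketch below works out how many doublings separate 200 qubits from 4000 and what doubling period a 10-year or 15-year timeline would imply.

```python
import math


def doublings_needed(current_qubits, target_qubits):
    """Number of doublings required to grow from current to target."""
    return math.log2(target_qubits / current_qubits)


def implied_doubling_period(years, current_qubits, target_qubits):
    """Years per doubling if the target is reached in the given time."""
    return years / doublings_needed(current_qubits, target_qubits)


doublings = doublings_needed(200, 4000)           # log2(20)
fast = implied_doubling_period(10, 200, 4000)     # 10-year timeline
slow = implied_doubling_period(15, 200, 4000)     # 15-year timeline
print(f"doublings needed: {doublings:.2f}")
print(f"years per doubling: {fast:.2f} to {slow:.2f}")
```

Under that assumption, 4000 qubits is a little over four doublings away, so the 10-15 year estimate corresponds to doubling roughly every two to three and a half years.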

All information presented above is for educational purposes only and should not be taken as investment advice. Summaries are prepared by The Reading Ape. While reasonable efforts are made to provide accurate content, any errors in interpreting and summarizing the source material are ours alone. We disclaim any liability associated with the use of our content.